The question

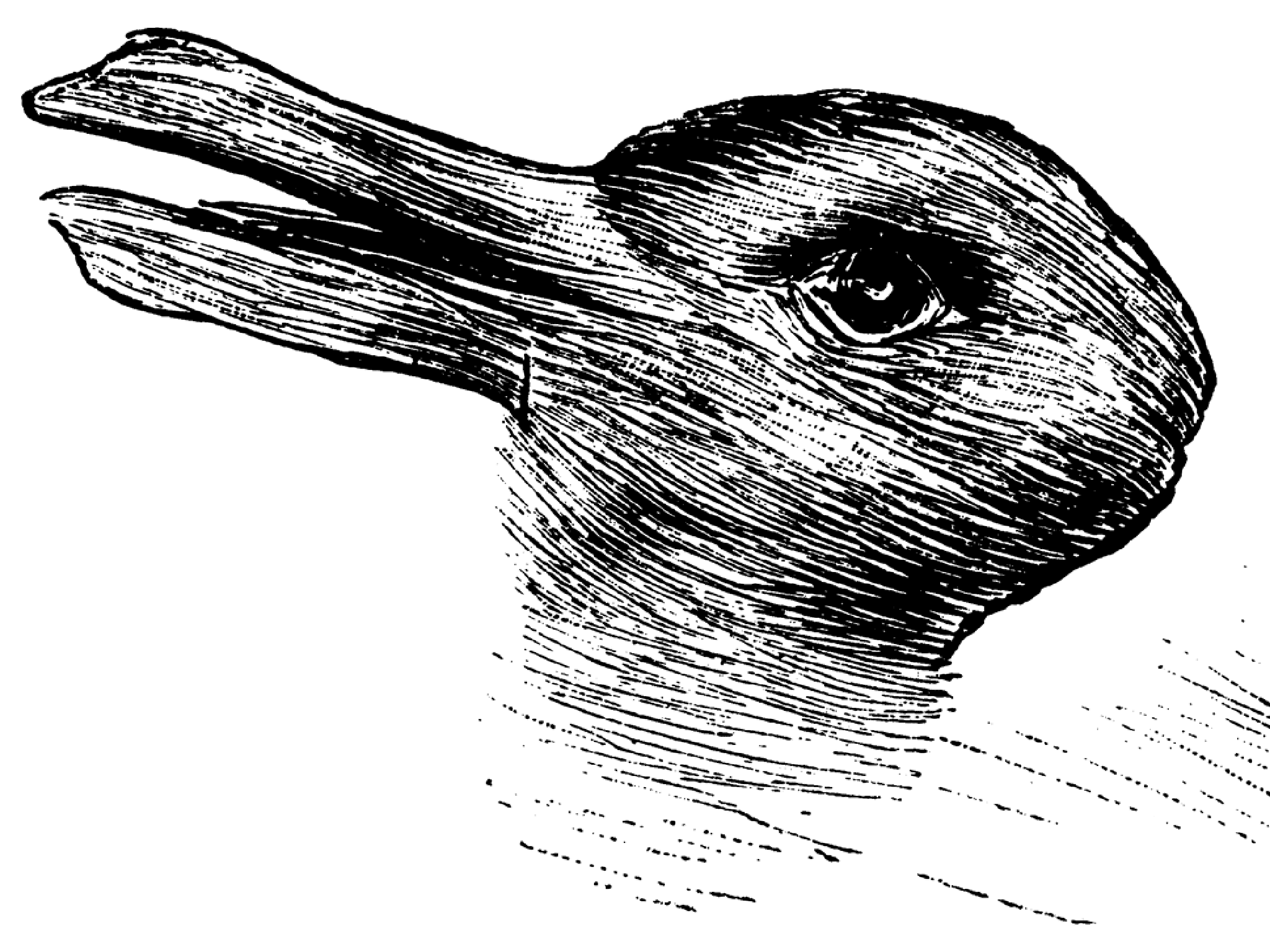

The duck-rabbit illusion has one image, two valid answers, and no correct one. That's what makes it useful, not as a trick, but as a test. I used it to ask: when CLIP looks at an ambiguous image, where does it land? And can I push it?

The duck-rabbit illusion is a classic example of bistable perception; your brain can hold either interpretation, and both are equally valid. Unlike most classification tasks where there's a ground truth, this illusion has none. That's what makes it interesting as a research tool: if CLIP has to pick one, what does it pick, and why?

What I did

CLIP maps images and text into the same embedding space, so "duck" and "rabbit" both exist as points, and so does the illusion image. I expected the illusion to sit somewhere in between the two, geometrically caught between both categories. I used PCA to reduce those high-dimensional embeddings to 2D so I could actually see this.

Then I tried to bias it.

In humans, perception shifts with color, cropping, and framing. I ran the same kinds of manipulations on the image, coloring specific regions red, green, and blue, cropping to emphasize duck or rabbit features, shifting the background, and measured whether CLIP's similarity scores moved toward rabbit or duck.

What I found

Coloring the left half biased CLIP toward rabbit. The right half pushed it toward duck. Same color, different location: opposite result. Full-image colorizations did almost nothing, which tells you CLIP isn't responding to global appearance. It's responding to local spatial structure, and that structure maps directly onto where the duck and rabbit features actually live in the image.

Cropping did the same thing. Isolate the bill region, similarity scores shift toward duck. Isolate the ears, they shift toward rabbit. This is exactly how human selective attention works, where you look determines what you see.

The classification can flip on a cosine similarity difference of 0.002. The illusion is sitting right on the decision boundary, and that instability is the point. Humans experience these illusions the same way, perception is unstable, context-dependent, and easy to tip!

Why it matters

Optical illusions are a window into how perception works, in humans, and apparently in models too. CLIP takes shortcuts, just like we do. The question is whether those shortcuts are grounded in anything like human cognition, or something else entirely. This project is a small step toward making that visible.